I would like to discuss the tension between near-science-fiction predictions about the development of AI and its grounded, practical applications.

Neither Gemini nor any other LLM takes credit for the contents of this blog post, though!

First of all, it is undeniable that AI is changing our lives and will have a transformative effect on the future. We can argue that humanity has lived through many transformative events before: the invention of fire, agriculture, writing, electricity, industrialization and information technology. Seen that way, AI is just one more of our inventions. But is AI really just one more invention, or something that will fundamentally change what it means to be human as we know it? Is it our last invention?

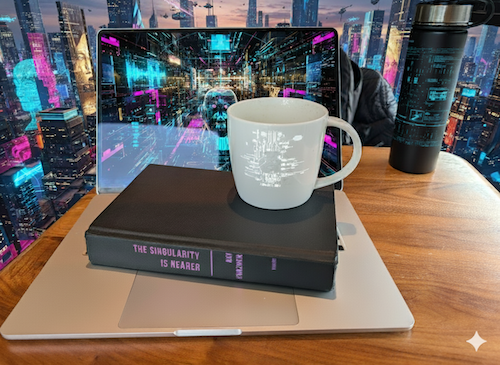

I just finished reading the book “The Singularity is Nearer”. The book argues that we will eventually extend the capabilities of our biological brains and go beyond the limits of our organic bodies. First, we will come up with inventions that greatly extend and improve our lives (reaching “longevity escape velocity” in the mid-2030s), and we will build brain-computer interfaces: think phones now, then AR glasses or something similar, then brain implants, then nanorobots, and eventually uploading our consciousness to the information network. As another book, “Homo Deus” (my review), argues, we will eventually become god-like, gaining the ability to control life and the environment, and Homo sapiens will go extinct. We might eventually lose our carbon-based existence and simply become information.

In my view, much of that, like nanorobots repairing our bodies, may sound like science fiction, but as long as it doesn’t break the laws of physics, I’m on board with the idea that it can and may happen.

Now, let’s look at some more practical examples.

- Transportation: Crossing the Atlantic took about 6 weeks under sail in the 1800s, 6 days on fast liners in the early 1900s, 8 hours by plane in the 1950s, and under 4 hours on Concorde in the 1970s. Rockets could technically take you from anywhere on the planet to anywhere else in about 90 minutes, yet we are still flying a boring 8 hours from London to New York.

- Military: We kept building ever larger nuclear bombs, but after the “Tsar Bomba” in 1961 it simply made no sense to build larger ones.

- Digital: digital camera resolution, audio quality, single-processor clock speed and many more examples where more has diminishing returns and eventually becomes impractical.

This pattern of hitting a practical limit is not just a historical curiosity; I can already see it happening in the world of AI. Let’s look at some examples:

- LLMs are now reaching a plateau of information saturation, where they have basically learned everything there is to learn from the internet.

- Vibe coding is mostly hype, in my opinion. Yes, I vibe-code for fun as well (see my previous post about building a multiplayer typing game in 3 hours), and it is a huge productivity booster. But I believe it is fundamentally like many other tools [post pending]: its benefits are logarithmic, huge at the beginning and ever more diminishing later on.

- Plateau in image recognition. This was once a grand challenge of computer science and is now largely solved for most practical purposes, but pushing models to 99.9% accuracy is not practical.

- Parameter count race. All those 7B, 70B and 1T-parameter models. There is no point in multi-trillion-parameter models; the cost is just not worth it. I recently ran a 7B LLM on my MacBook Air, and it is not bad at all.

My point is that in many individual fields where AI is applicable, we will reach some kind of optimal point between theoretical possibility and practical application. Along the way we will see major transformations; for example, the entire sector of driving-related jobs will be replaced by self-driving vehicles. There is a good chance this will create socio-economic disruptions and ripple effects. Just imagine: rich “haves” could give their children superpowers while poor “have-nots” could not afford to. But I agree that this is only a transitional phase, because people in some poor countries can now afford a phone that, in the mid-20th century, would have represented billions of dollars’ worth of technology.

My own predictions are that:

- AGI is still very far away, on a much longer time frame than “The Singularity is Nearer” argues for. Maybe 10 to 30 years from now.

- Each and every AI application will reach its optimal practical point.

- Human lives will improve as they did with other technologies.

- Software engineering jobs won’t go extinct, but they will transform, and we need to adapt.

- I will die, but someone born next century might not.


~~~~