November 29, 2025 AI, Personal No comments

AI Toolkit Research for Software Engineer

Disclaimer: I do not promote any of the tooling mentioned in this blog post nor have I used the vast majority of them. All of the text written by me is my personal opinion. All of the text generated by LLM is its creation – I will be explicit where it is LLM and where it is me.

Unlike most posts, where I emphasize my own experience and write everything myself, this blog post is assisted by an LLM, but in a good way. I want to create a comprehensive list of AI tools to explore for myself. Although it will include some tools very specific to my personal needs (like climbing), I believe you can apply the same approach to get your own ideal list. This could have been a LinkedIn post titled “Definitive list of AI tools you must use”, but I cannot stand those posts. This is just an idea of how you can come up with your own list:

Step 1: Refine research plan

Prompt: “Refine this prompt: Perform research on the landscape of currently available LLM products and AI tooling for both professional and personal development for the user profile and wider audience, using referenced examples of already used tooling. User Profile: A Software Engineer at a big tech company in the Seattle area. Family with two kids, sports (climbing, Muay Thai, running), writing blog posts, coding for fun, and wondering about life in general. Researching topics such as: health, finance, 3d printing, writing about tech, coding, taxes, parenting, relationships, career decisions, trip planning, real estate, etc. Reference examples: ChatGPT, Gemini (deep research, chat, image gen), Claude Sonet for coding, locally running small LLMs, trying out different AI startups for intermittent tasks.”

The above text was me. The below text is the proposed research plan by LLM:

(1) Research the current landscape of major LLM products (such as ChatGPT, Gemini, Claude) and emerging AI startups in late 2025, specifically analyzing their capabilities for professional software engineers (coding agents, debugging, architecture design).

(2) Investigate the ecosystem of open-source and locally executable LLMs (compatible with Ollama) and privacy-focused tools suitable for a tech-savvy user running consumer hardware.

(3) Search for specialized AI tooling and applications tailored to the user's specific hobbies and creative interests:

(a) Sports performance analysis for climbing

(b) Sports performance analysis for Muay Thai

(c) Sports performance analysis for running

(d) 3D printing modeling and slicing optimization

(e) Technical blogging and creative writing assistance

(4) Find AI resources and tools assisting life management, covering:

(a) Parenting, education, and kids' activities in the Seattle/Bellevue area

(b) Real estate market trends and housing analysis in Vancouver, BC

(c) Personal finance, tax preparation, and investment tracking in the Seattle/Bellevue area

(5) Explore AI interfaces designed for broad life topics, such as philosophical conversation, relationship coaching, career development planning, and travel logistics.

(6) Synthesize the findings to categorize tools by professional utility versus personal development, comparing them against the user's current stack (ChatGPT, Gemini, Claude, Ollama) to identify gaps and superior alternatives.

Step 2: Run multiple researches and synthesize takeaways

I’ve run 4 reports with the above prompt or some variations of it. In the end I got four 20-page documents. After skimming through them, several problems were obvious: a) some info is outdated, like recommending Claude Sonnet 3.5 when I’m already using 4.5; b) recommendations are too generic; c) some sections were too verbose and some too short for my needs; d) some recommendations are contradictory, for instance recommending Ollama for privacy and then suggesting that I upload private data to small startups.

One idea I had was to feed all four documents into another research run, specifically asking for a summary with bullet points. Unfortunately the result was a 5th document of no better quality. Another approach I took was the prompt: “Create a maximum 5 pages summary from the four similar documents. Do bullet points. Keep links to websites. Do NOT do research.” This gave much better results.
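If you would rather script this step than paste documents into a chat window, a minimal sketch could look like the one below. It is an assumption, not what was actually run for this post: `build_summary_prompt` and `summarize_with_ollama` are hypothetical helper names, the model name `llama3.1` is a placeholder, and the HTTP call assumes a local Ollama server listening on its default port.

```python
import json
import urllib.request

# The exact instruction text quoted in the post.
SUMMARY_INSTRUCTIONS = (
    "Create a maximum 5 pages summary from the four similar documents. "
    "Do bullet points. Keep links to websites. Do NOT do research."
)


def build_summary_prompt(documents: list[str]) -> str:
    """Join the instructions and the labelled reports into one prompt."""
    parts = [SUMMARY_INSTRUCTIONS]
    for index, text in enumerate(documents, start=1):
        parts.append(f"--- DOCUMENT {index} ---\n{text.strip()}")
    return "\n\n".join(parts)


def summarize_with_ollama(
    prompt: str,
    model: str = "llama3.1",  # placeholder; use any model you have pulled
    url: str = "http://localhost:11434/api/generate",
) -> str:
    """Send the prompt to a local Ollama server (assumed to be running)."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    request = urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]


if __name__ == "__main__":
    # Stand-in texts; in practice these would be the four exported reports.
    reports = ["Report one text...", "Report two text..."]
    print(build_summary_prompt(reports))
```

Four 20-page reports may not fit into the context window of a small local model, so this sketch is more of a starting point than a guaranteed replacement for a hosted chat.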

Step 3: Edit the result

So I’ve got approximately what I need. The next step was to iterate a few times on the list. E.g. I asked the LLM to add a list of general health and longevity tooling following the same format. Copy-paste, read through, and add more sections. The appendix at the end is the list by AI, with minor edits from me.

Step 4: Action Plan

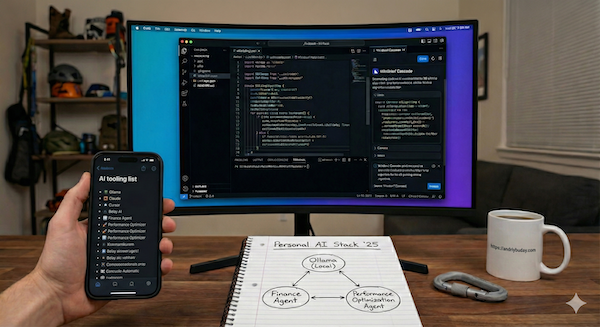

Obviously I won’t be trying all of the tooling (that would be crazy); the goal is to explore what’s available within my areas of interest. As an action plan, I highlighted some tools below to try out or to use more actively:

- llms.txt – a file to be added to this blog post so AI knows how to read it.

- Ollama: The CLI standard for running local models. Already using it, but will probably use it even more (I have an Apple M4 processor with 32 GB of memory, so some LLMs run just fine).

- NotebookLM: Google’s AI-Powered research partner. Have seen demos of this one and should try.

- Belay AI: analyzing center of mass and hip trajectory when climbing. Didn’t know such a thing existed. Would be curious to try out.

- Garmy: Python library and MCP server linking Garmin data to Claude Desktop or Cursor for agentic analysis. Sounds like something I would like to play with next.

- Meshy: Converting prompts to 3D models. Already tried it but wasn’t too happy with the results. Will give it another try.

- Layla & Wanderlog: AI travel agents. I have used general LLMs for travel planning before, but I’m curious to try a tailored tool.

- Orai: Pocket AI coach analyzing recordings for filler words, energy, and clarity.

Conclusion

I believe the AI and LLM tooling landscape is very saturated. There is a tool or a startup for almost anything you can think of. The point is not the specific list but how I arrived at it and how it is tailored to my needs. In this blog post I provided a method for arriving at your own list of AI tooling that is applicable specifically to you.

Alert: wall of text below.

APPENDIX: THE RESULT

Disclaimer: I am not advertising or promoting any of the tooling below, and I have no affiliation with any of the companies or products mentioned. The text below is generated by LLM. I only reviewed it.

Executive Summary: The Shift to Agentic Workflows

The technological paradigm has shifted from “Chatbots” (passive Q&A) to “Agents” (active execution). The competitive advantage in 2025 belongs to the “Augmented Architect” who orchestrates specialized AI entities to manage full-stack development, complex financial engineering, and physical performance.

1. The Professional Engineering Workbench

The modern workflow bifurcates into Integrated Agents (living in the IDE) and Headless/Terminal Agents (operating autonomously).

The Battle for the IDE: Cursor vs. Windsurf vs. Copilot

- Cursor: The “Architect’s Instrument”.

- Best For: Heavy refactoring and legacy codebases.

- Key Feature: “Composer” and “Shadow Workspace” index the entire codebase to predict multi-line edits and handle global refactors.

- Model: Uses Claude 3.5 Sonnet for superior code structure nuance.

- Pricing: $20/month; generally preferred by power users over Copilot.

- Windsurf: The “Flow State” Facilitator.

- Best For: Greenfield projects and rapid prototyping.

- Key Feature: “Cascade” flow tracks user actions (terminal commands, clipboard) to infer intent, actively running tests and fixing errors.

- Differentiation: Focuses on keeping the developer in “flow” rather than granular control.

- GitHub Copilot: The Enterprise Incumbent.

- Best For: Corporate environments with strict compliance needs.

- Status: Now includes “Agent Mode,” but critics note the chat often feels disconnected from the editor compared to AI-native rivals.

Command Line & Autonomous Agents

- Claude Code (CLI): A terminal-resident agent that replaces the chat interface. It can navigate directories, read files, execute Unix commands, and handle large-scale refactoring.

- Cline / Roo Code: Open-source VS Code extensions that act as “Headless Developers.” They can execute terminal commands and create files autonomously, allowing for a “Bring Your Own Key” (BYOK) model.

- Deep Think Models: Google’s Gemini 2.5 Pro utilizes “parallel hypothesis testing,” allocating a “thinking budget” to simulate System 2 thinking for architectural reviews and debugging race conditions.

The Sovereign Stack: Local Inference on Apple Silicon

For IP protection and privacy, running models locally on M3/M4 Max chips (Unified Memory) is the standard.

- Ollama: The CLI standard for running local models (like Docker for LLMs).

- LM Studio: A GUI alternative for discovering and testing models.

- Top Open-Source Models:

- DeepSeek-Coder-V2: Uses Mixture-of-Experts (MoE) for high reasoning with efficient inference; ideal for logic-heavy tasks.

- Qwen 2.5 Coder: The premier open-source choice for daily coding, rivaling GPT-4 in benchmarks (88.4% HumanEval) and running on 32GB+ RAM.
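A rough back-of-the-envelope check helps decide which of the models above fit on a given machine. The sketch below counts the memory for the weights only; `estimate_weight_memory_gb` is a hypothetical helper, and the estimate deliberately ignores the context cache, runtime overhead, and the fact that real quantized files often use slightly more bits per weight than their nominal figure.

```python
def estimate_weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in GB (10**9 bytes)."""
    bytes_per_weight = bits_per_weight / 8
    # Billions of parameters times bytes per parameter gives gigabytes.
    return params_billions * bytes_per_weight


for name, params in [("7B", 7), ("32B", 32), ("70B", 70)]:
    for bits in (16, 4):
        size = estimate_weight_memory_gb(params, bits)
        print(f"{name} model at {bits}-bit: about {size:.1f} GB of weights")
```

By this estimate a 32B model quantized to 4 bits needs about 16 GB for its weights, which leaves headroom on a 32 GB machine, while a 70B model at 4 bits (about 35 GB) does not fit.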

Career Engineering & Strategic Presence

Interview Intelligence (The “Copilot” Era)

- Final Round AI: Real-time “Interview Copilot” offering transcription and live hints.

- InterviewBee AI: Adaptive mock interviews that dynamically adjust difficulty.

Strategic Networking & Personal Branding

- Supergrow: LinkedIn growth tool generating tone-matched content from past posts.

- Taplio: Identifies viral technical topics and drafts high-visibility posts.

Performance Engineering (Automated Brag Docs)

- Lattice AI: Auto-drafts reviews, translating engineering metrics into business impact narratives.

- Fellow: Auto-generates “Brag Docs” by tracking wins from 1:1s year-round.

Public Speaking & Leadership Intelligence

Voice Cloning & Auditory Feedback

- ElevenLabs Voice Cloning: Clones your voice to objectively audit delivery and identify awkward phrasing before speaking.

- Orai: Pocket AI coach analyzing recordings for filler words, energy, and clarity.

Simulation & Real-Time Coaching

- Yoodli: Simulation platform providing private analytics on eye contact and pacing during practice speeches.

- Poised: Real-time meeting assistant offering live, private feedback on speaking speed and confidence.

- VirtualSpeech: VR-based training for practicing presentations in realistic 3D environments (e.g., boardrooms).

2. Wealth Management

For a Seattle engineer with RSUs and complex taxes, standard budgeting apps are insufficient.

The “Finance as Code” Approach (Privacy-First)

- Beancount: A Python-based double-entry bookkeeping system that stores financial records in plain text. It treats finance like code (version control, CI/CD).

- Fava: The web UI for Beancount.

- Fava Investor Plugin: Calculates IRR and tracks asset allocation across disparate accounts.

- Automation: Python scripts (e.g., wash-sale-tracker) can parse trade history to track wash sales and automate RSU vesting tracking.
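To make the automation idea above more concrete, here is a minimal sketch of flagging possible wash sales in a trade history. It is an illustration under stated assumptions, not the code of any existing script and not the Beancount API: `Trade` and `find_possible_wash_sales` are hypothetical names, an RSU vest is treated as a `BUY`, and the 30-day window before or after a loss-making sale follows the usual description of the US wash-sale rule. It does no lot matching, so it only produces candidates for manual review.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Trade:
    day: date
    symbol: str
    action: str        # "BUY" (including RSU vests) or "SELL"
    quantity: int
    gain: float = 0.0  # realized gain for SELL rows; negative means a loss


def find_possible_wash_sales(
    trades: list[Trade], window_days: int = 30
) -> list[tuple[Trade, Trade]]:
    """Pair each loss-making sale with buys of the same symbol inside the window."""
    window = timedelta(days=window_days)
    flagged = []
    for sale in trades:
        if sale.action != "SELL" or sale.gain >= 0:
            continue  # only sales at a loss are relevant
        for buy in trades:
            same_symbol_buy = buy.action == "BUY" and buy.symbol == sale.symbol
            if same_symbol_buy and abs(buy.day - sale.day) <= window:
                flagged.append((sale, buy))
    return flagged


# Made-up sample data for illustration only.
history = [
    Trade(date(2025, 3, 3), "ACME", "SELL", 10, gain=-500.0),
    Trade(date(2025, 3, 20), "ACME", "BUY", 5),   # e.g. an RSU vest 17 days later
    Trade(date(2025, 6, 1), "ACME", "BUY", 5),    # outside the window
    Trade(date(2025, 3, 10), "OTHER", "BUY", 5),  # different symbol
]

for sale, buy in find_possible_wash_sales(history):
    print(f"Review: {sale.symbol} loss on {sale.day} vs. purchase on {buy.day}")
```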

The SaaS Optimization Route

- Prospect: Specialized for tech employees with ISOs/RSUs. It models tax implications of exercising options and calculates the “AMT crossover point” to prevent surprise tax bills.

- Compound Planning: Tracks net worth across illiquid assets and models scenarios for RSU vesting and tax cliffs.

- StockOpter: Specifically addresses equity compensation guidance and AMT modeling.

- HouseSigma: Essential for the Vancouver, BC market. Uses AI to provide “Sold” history and valuation estimates, unlocking data previously gated by realtors.

- VanPlex: Analyzes zoning data to identify “under-utilized” lots suitable for multiplex development (Bill 44), aiding in investment arbitrage.

Financial Education & Investment Intelligence

Tools for deep research, interactive learning, and risk-free simulation (Paper Trading).

Deep Research & Earnings Intelligence

- AlphaSense: Institutional “semantic search” for broker research and earnings calls; highlights off-script management answers.

- Quartr: Mobile access to live earnings calls/transcripts; “search across audio” finds specific keyword mentions instantly.

- FinChat.io: “ChatGPT for Finance” providing sourced answers from verified 10-Ks/10-Qs to minimize hallucinations.

Interactive Education & Family Literacy

- Magnifi: AI investing tutor; explains portfolio balance and stock fit via conversational interface rather than just charts.

- Zogo: Gamified literacy app for families; breaks complex topics into bite-sized modules with rewards.

Simulation & Practice (Paper Trading)

- Thinkorswim: Institutional-grade paper trading with “PaperMoney” to test strategies risk-free.

- Webull: UX-friendly platform for beginners to practice trading mechanics before deploying capital.

3. The Quantified Athlete: Physical Intelligence

AI is transitioning from logging data to providing active biomechanical coaching.

Rock Climbing

- Belay AI: Uses computer vision (pose estimation) on a smartphone to analyze center of mass and hip trajectory, identifying micro-inefficiencies in movement.

- KAYA Pro: Digitizes climbing sessions and calculates “Workload” to prevent overtraining. It filters “beta” videos by body morphology (e.g., finding beta for a specific height).

- Lattice Training: Uses datasets to benchmark finger strength and build periodized training plans.

- Crimpd: Utilizes analytics to prescribe hangboard workouts and manage training loads.

Muay Thai & Running

- Sensei AI: A virtual coach for Muay Thai that analyzes shadow boxing via camera. It corrects hip rotation on kicks and guard retraction.

- RunDot: The data scientist’s choice. Uses “Environment Normalization” to adjust pace targets based on heat/humidity, ensuring constant physiological stimulus.

- Runna: Focuses on UX and community, gamifying the training process for better adherence.

General Health & Longevity Intelligence

The intersection of “Quantified Self” and AI for preventative health and programmable biology.

Developer-Friendly Health Data

- Garmy: Python library and MCP server linking Garmin data to Claude Desktop or Cursor for agentic analysis.

- HealthGPT: Open-source iOS app using on-device LLMs to query Apple Health data privately.

Longevity & Preventative Analytics

- InsideTracker: “Programmable” biology platform; integrates wearable APIs and uses Terra AI to map blood biomarkers to peer-reviewed optimization protocols.

- Function Health: Deep clinical baseline with a 100+ biomarker panel for early detection and chronic disease prevention (closed system).

- Superpower: Accessible longevity diagnostics and biological age tracking at a subscription-friendly price point.

4. Creativity & Fun

Bridging the gap between software and physical artifacts.

3D Printing Stack

- OrcaSlicer: The “Open Source Victory” for 2025. Offers granular control (jerk/acceleration settings) and “Scarf Joint Seams” for aesthetics.

- Obico: AI failure detection (spaghetti detective). It monitors the print bed via camera and pauses prints to prevent fire/waste. Can be self-hosted on a Raspberry Pi.

- Zoo (formerly KittyCAD): “Text-to-CAD” API. Generates editable, parametric CAD models (code-based) rather than simple meshes.

- Meshy: Generates 3D assets from text prompts, best for rapid prototyping or game assets.

Knowledge & Blogging Pipeline

- Repo-to-Blog: A workflow using Gitingest or GitHub Actions to convert codebases into token-optimized summaries. These are fed into LLMs to automatically generate technical “DevLogs” from commit history.

- Obsidian + Smart Connections: A “Second Brain” setup where the plugin uses local embeddings to allow you to “chat” with your notes vault.

- llms.txt: A proposed convention rather than a formal standard. Adding this Markdown file to a personal site gives AI agents a curated, easy-to-parse overview of the site’s content.
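For the llms.txt item above, a minimal sketch of generating such a file might look like this. The layout (a title heading, a short blockquote summary, then sections of Markdown links) follows the public llms.txt proposal as I understand it; `render_llms_txt`, the blog name, and the `example.com` URLs are hypothetical placeholders.

```python
def render_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt-style Markdown document.

    `sections` maps a section heading to (link name, URL, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
    return "\n".join(lines) + "\n"


content = render_llms_txt(
    title="Example Blog",
    summary="Personal blog about software engineering and AI tooling.",
    sections={
        "Posts": [
            (
                "AI Toolkit Research",
                "https://example.com/ai-toolkit",
                "How to build a personal list of AI tools",
            ),
        ],
    },
)
print(content)
```

The resulting text would be saved as `llms.txt` at the root of the site.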

Music Exploration & Vibe Matching

- Spotify AI Playlist: Generates playlists from creative text prompts (e.g., “songs for a rainy cafe”).

- PlaylistAI: Creates playlists from text prompts, images, videos, or festival posters.

- Maroofy: Search engine that matches songs by “audio vibe” rather than just artist similarity.

- Cyanite: Advanced “Free Text Search” to find songs matching specific moods or descriptors.

- Music-Map: Visual tool that creates a floating “cloud” of related artists based on fan affinity.

- NotebookLM: Google’s research tool; upload your concert history/venue calendars to create a custom event finder.

- HyperWrite: AI agent capable of browsing the web to find specific tickets or venue schedules for you.

5. Life Logistics

Tools acting as “Chief of Staff” for the household.

- Ohai.ai: Ingests unstructured data (screenshots of flyers, voice memos) to manage family calendars and conflicts.

- Milo: An SMS-first family assistant (powered by GPT-4) that manages logistics via natural conversation.

- HomeZada: A digital home management platform that uses AI to predict maintenance costs and schedule seasonal repairs.

- Familymind: Synthesizes school PDFs and sports schedules into a master calendar.

- Layla & Wanderlog: AI travel agents. Layla handles discovery/booking; Wanderlog optimizes daily routes and logistics while traveling.

- Magic School: Generates personalized tutoring content and educational activities.

Don’t Outsource Your Thinking: Why I Write Instead of Prompt

November 23, 2025 AI, Blog, Opinion, Personal No comments

Original content by Andriy Buday

I’ve been asked multiple times about my writing process, how I keep consistency, and why I write blog posts at all. Who in their right mind spends multiple hours weekly to write when there are LLMs that generate the same quality text within a minute? Let me share my secrets and answer these 4 questions:

- Why do I write at all?

- Isn’t it all just a waste of time because of LLMs?

- What’s my writing process?

- How do I stay consistent?

Image credit: Gemini Nano Banana Pro. I admit the image is cheesy, lol, but it’s also fun.

Why do I write at all?

Writing is structured thinking

Over time I confirmed to myself that ‘writing is thinking’, but also that, unlike speaking or thinking in my head, writing is a structured way of thinking. You get the privilege of ‘parking’ some thoughts for further elaboration, you get the privilege to validate your thoughts with external research, and you get the privilege to mold things and shuffle them around, decompose and synthesize again.

Writing is a transferable skill

Undeniably writing is a skill, but, in my opinion, it is a transferable one. As I work on improving my writing process, it becomes easier for me to write documents at work and easier to write my personal documents (financial planning, goal setting, emails, etc). The more I write the easier it is to overcome that initial ‘hurdle’ of starting a new document. I am a doc producing machine at work: meeting notes – I’ve got it; short design doc – I’ve got it; just documenting my work trip – I’ve got it; producing ‘announcement’ document – I’ve got it. None of it seems daunting. I also wrote a post on “Why documenting everything you do at work matters” believing it is beneficial for your career, especially performance reviews and promos.

Isn’t it all just a waste of time because of LLMs?

Producing vs. Consuming

Here is a big secret, my dear readers: I’m writing mostly for myself, and I have a strong argument why it is worth my time instead of just prompting LLMs. For the sake of argument, I just kicked off Gemini’s ‘Deep Research’ on the topic of tech writing, answering the 4 questions from above. I’m confident that in ~3 minutes I will have a PhD level research paper on this topic. What do I gain from that research? What do you gain from that LLM research? Well, we become consumers – I can read that research paper and, for sure, it will have many punchy arguments and external pointers to like 100+ websites to learn from, but this trains our “info => brain” path; it does not train our “brain => info synthesis” path. Very specifically, the next time you need to produce new information, the retrieval/producing ‘paths’ in your brain are not trained for that.

Numbers

Let’s also do some numbers to see the worthiness of this activity:

- Range of 2-5 hours per week writing blog posts. It is closer to 2h for writing itself like this post and closer to 5h for larger technical/coding posts.

- 370 blog posts so far.

- 800 comments with praise/admiration and additional insights I was missing.

- Only 3k pageviews/month and only 50 mail subscribers.

- I have no ad income (I made less than $200 in the past as an experiment).

- In a way, the blog is an ‘Ad’ of myself.

- Upskilling my tech writing.

- Hardly measurable influence on my career growth, but it’s definitely there.

What’s my writing process?

Sourcing Topics and Info

To be honest, at times it is very challenging to come up with new blog post ideas, and even when I have an idea, expanding on it is also quite a tedious process. I have a “blog post ideas” document which just sits there in my Google Docs. Whenever something crosses my mind I add it there. Another source of ideas is a question I get from someone either at work or in my personal conversations. For instance, this blog post was inspired by a person asking about my writing process as he was struggling a bit with writing some roadmap/design document at work. I hear you. This blog post is for you.

Writing Process Itself

At very early stages I usually start by just pouring out thoughts and ideas in raw, unfiltered, and very unstructured ways. This is just the expansion step of my framework of dealing with ambiguity. At this stage focusing on quality, perfection, or structure is counter-productive. If this is a technical design document, then some template for structure is usually already given, so that ‘pouring’ of thoughts happens in compartments. Then, once I have lots of unstructured thoughts, I do more research, I try to find key points and rephrase where needed; this is where trimming also happens. At later stages I would use LLMs to help me out, but I am generally against using LLMs for everything, and I definitely do not use them for my blog writing. At work, generating a summary, bullet points, or an initial structure is definitely easier with LLMs, and it would be a mistake not to use them.

LLMs

Yeah, I do use LLMs – not for writing or structuring my thoughts but for other purposes. The main one: finding blind spots in my thinking. I have had many profound realizations about missing key arguments thanks to LLMs; not only that, even in my personal life I came to realize that there are things I simply perceive differently from other people – eye opening. Another use of LLMs is to suggest refinements to text, but not so much proof-reading, apart from catching obvious typos. Honestly, sometimes, I just cannot stand all this ‘sophisticated flowery’ text generated by LLMs. When I see people write ‘significant impact drastically improving leverage of comprehensive coverage of’ – I know it is LLM and it sucks. You can know these are my own words, because LLMs avoid confrontation. Another way I’m using LLMs for my writing is coming up with a common theme in my thinking and generating ideas for the best title.

Focusing on Experience and own Opinions

In the light of LLMs I found it to be ever more important to focus on my own experiences, strong opinions, and on building my own personal expertise. That’s the main distinguishing factor. No LLM has my brain or knows my thoughts.

How do I stay consistent?

Connect Inspiration Periods in One Continuous Chain

This is another question I get quite often. The answer: Consistency is Hard. What works for me is multi-year habit building. I failed many times and I had to restart over and over again until it actually started working in a connected, chained manner. The analogy I give is from rock climbing: to climb a mountain you connect multiple single pitch climbs. We get inspired at times (a New Year’s resolution, someone inspires you), then we do a few iterations, and then we fail. I say: get inspired more often, on purpose, and then connect those periods into a continuous chain! Get inspired by books, people you admire, your mentors, your super-smart colleagues, someone you love, and last but most importantly, your future self!

Conclusion

Writing is not always just the output, but also the process. I see writing as “gym for the brain”. For sure, LLMs can lift weights for you, but that won’t make your thinking and writing stronger. It is like watching fit and strong people on Instagram and wishing you were like them without doing anything. The world is dominated by consumption, and in my opinion being able to structure your thoughts and articulate them clearly is something that will make you stand out.

APPENDIX: Additions by Gemini 3 PRO LLM

Below are some interesting extracts from the 17 page ‘Research Paper’ produced by Gemini on my ‘deep research’ request about technical blog writing and from analysis of the above text:

Blind spot: The “Quality” Trap

You mention LLMs generate “flowery” text that sucks. While often true, advanced prompting can mimic style fairly well. Suggestion: Strengthen the argument that the value isn’t the final text, but the struggle to produce it. Even if an LLM wrote a perfect post in your style, it would still be a “waste” because you didn’t do the cognitive lifting to get there. It’s like sending a robot to the gym for you.

Luck Surface Area

Blogging increases an engineer’s “Luck Surface Area.” This concept suggests that the amount of serendipity (job offers, speaking invitations, consulting opportunities, co-founder inquiries) one encounters is directly proportional to the number of people who know what one is doing.

Beyond the internal mechanics of a current employer, blogging functions as a potent “sales aid” for the engineer’s broader career trajectory.2 Recruiters and hiring managers at elite firms increasingly value communication skills as a primary differentiator. According to industry data, a significant majority of recruiters prioritize communication skills, sometimes even above raw technical proficiency, because technical knowledge can be taught, whereas the ability to articulate complex logic is a rarer trait.

Linearization of Thought and Feynman Technique

The process of writing requires the linearization of thought. Code can be non-linear; it jumps between functions, modules, and asynchronous callbacks. Prose, however, must flow logically from premise to conclusion. This forcing function exposes gaps in understanding. As noted in the analysis of engineering blogging benefits, writing a blog post often reveals that the author does not understand the code as well as they thought they did. This aligns with the “Feynman Technique,” which posits that one does not truly understand a concept until one can explain it in simple terms to a layperson.

Transfer of Experience

However, LLMs struggle with context, nuance, and novelty. They cannot hallucinate genuine experience. They can explain what a circular dependency is, but they cannot explain how it felt to debug one at 3 AM during a Black Friday traffic spike, nor can they navigate the specific political and technical constraints that led to that dependency in the first place.

The value of human writing has shifted from Transfer of Information to Transfer of Experience. The “Small Web” movement is a reaction to this; it is a flight to authenticity. Readers are looking for the “red hot branding iron” of human personality—the idiosyncrasies, the opinions, and even the biases that signal a real person is behind the text.15 As AI content proliferates, the premium on “human-verified” knowledge increases.

Case Study: Gergely Orosz (The Pragmatic Engineer)

Gergely Orosz serves as the gold standard for the modern technical writer. His transition from engineering manager at Uber to full-time writer was built on a specific process 39:

- Crowdsourcing via Surveys: Orosz often gathers data before writing. For an article on “Developer Productivity,” he surveyed 75+ engineers and managers across the industry.39 This provides proprietary data that no LLM can access.

- Structured Workflow: He treats writing with the discipline of coding, using outlines and working with editors/publishers to force progress.41

- Mimicry: He openly advises starting by mimicking role models.42 If you admire a specific engineering blog, analyze its structure and replicate it until you find your own voice.

The Practical Ceiling: AI, Diminishing Returns, and Our Future

October 19, 2025 AI, Opinion No comments

I would like to discuss a dilemma between near-science-fiction predictions about the development of AI and grounded practical applications of AI.

Gemini or any other LLM does NOT take credit for the contents of the blog post, though!

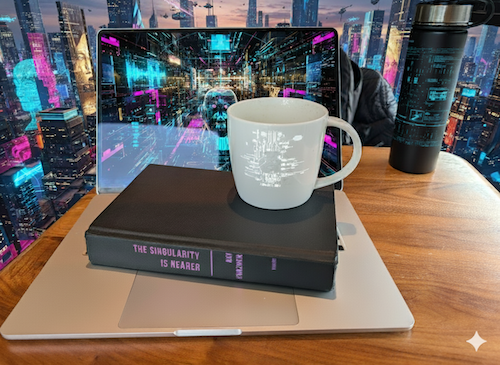

First of all, it is completely undeniable that AI is changing our lives and will have a transformative effect on the future. We can argue that humanity has lived through many transformative events over and over again: invention of fire, agriculture, writing, electricity, industrialization, information technologies, so AI can be seen as just one more invention on our part. Now, is AI really just one more invention or something that would absolutely change what it is to be a human as we know it? Is this our last invention?

I just finished reading the book “The Singularity is Nearer”. The book argues that we will eventually extend the capabilities of our biological brains and go beyond the limits of our organic bodies. At first we would come up with inventions that greatly extend and improve our lives (reaching “longevity escape velocity” in the mid-2030s), and then we would build brain-computer interfaces (think of phones now, AR glasses or something of the like next, then brain implants, then nanorobots, with eventual consciousness upload to the information network). As another book, “Homo Deus” (my review), argues, we eventually become god-like and gain the ability to control life and the environment, and Homo sapiens goes extinct. We might eventually lose our carbon-based existence and just become information.

To my way of thinking, while much of that, like nanorobots repairing our bodies, may sound like science fiction, as long as it doesn’t break the laws of physics I’m on board that it can and may happen.

Now, let’s look at some more practical examples.

- From transportation technology: Crossing the Atlantic took 6 weeks of sailing in the 1800s, 6 days in the early 1900s with fast liners, 8 hours by plane in the 1950s, and under 4 hours on Concorde in the 1970s. Technically, 90 minutes from anywhere on the planet to anywhere else is possible by rocket, but we are still flying a boring 8 hours from London to New York.

- Military: We came up with ever larger nuclear bombs, but post “Tsar Bomba” in 1961 it simply doesn’t make sense to make any larger ones.

- Digital: digital camera resolution, audio quality, single processor’s clock speed, and many more examples where more has diminishing returns and becomes impractical.
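To put numbers on the transportation example above, here is a small sketch that computes how much time each new technology saved over the previous one. The durations are the ones quoted in the list; treating Concorde as exactly 4 hours is my simplification of “<4h”, and `step_improvements` is a hypothetical helper name.

```python
# Atlantic crossing times in hours, as quoted in the list above.
crossings = [
    ("1800s sailing ship", 6 * 7 * 24),  # 6 weeks
    ("early 1900s fast liner", 6 * 24),  # 6 days
    ("1950s plane", 8),
    ("1970s Concorde", 4),               # "<4h", using 4 as an upper bound
    ("rocket (theoretical)", 1.5),       # 90 minutes
]


def step_improvements(rows):
    """For each step, return (name, hours saved, speedup factor) vs. the previous row."""
    results = []
    for (_, before), (name, after) in zip(rows, rows[1:]):
        results.append((name, before - after, before / after))
    return results


for name, saved, speedup in step_improvements(crossings):
    print(f"{name}: saves {saved:g} h, {speedup:.1f}x faster")
```

Each step is still several times faster than the one before, but the absolute hours saved shrink from 864 to 136 to 4 to 2.5, which is the diminishing-returns pattern in a nutshell.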

This same pattern of hitting a practical limit is not just a historical curiosity because I can see it already happening in the world of AI. Let’s have a look at some examples:

- LLMs are now reaching the plateau of information saturation where they basically learned everything there is to learn from the internet.

- Vibe coding is mostly hype in my opinion. Yes, I do vibe coding as well for fun, like in my previous post about building a multiplayer typing game in 3 hours, and it is a huge productivity booster, but I believe it is fundamentally like many other tools [post pending]: it has logarithmic benefits, huge at the beginning and then ever more diminishing.

- Plateau in image recognition. This once was a grand challenge of computer science, and is now a largely solved problem for most practical purposes, but pushing models to 99.9% accuracy is not practical.

- Parameter count race. All those 7B to 70B to 1T parameter models. There is no point in multi-T models and the cost is just not worth it. I recently ran a 7B model on my MacBook Air and it is not that bad at all.
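To make the "logarithmic benefits" idea from the list above concrete, here is a minimal sketch. It assumes, purely for illustration, that the benefit of a tool grows like log(1 + effort); the function names and the formula are hypothetical, not a measured model of any real tool.

```python
import math


def benefit(effort: float) -> float:
    """Hypothetical payoff curve: benefit grows like log(1 + effort)."""
    return math.log1p(effort)


def marginal_gain(effort: float, step: float = 1.0) -> float:
    """Extra benefit gained from one more unit of effort at a given level."""
    return benefit(effort + step) - benefit(effort)


# The first unit of effort pays off far more than the hundredth.
for effort in (0, 1, 10, 100):
    print(f"effort={effort:>3}  benefit={benefit(effort):.2f}  "
          f"next unit adds {marginal_gain(effort):.3f}")
```

Under this assumption every extra unit of effort still helps, but each one helps less than the one before, which is the "huge at the beginning, diminishing later" shape described above.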

My point is that in many individual fields where AI is applicable we will be reaching some kind of optimal point between theoretical possibility and practical application. In the process we will be seeing major transformations, like the entire sector of jobs associated with driving being replaced by self-driving vehicles. There is a good chance this could create socio-economic disruptions and ripple effects. Just imagine, some rich “haves” can give their child superpowers while some poor “have nots” cannot afford that. But I agree with the point that this is only “in transit”, because now people in some poor countries can afford a phone that would have been worth multiple billions in technology in the middle of the last century.

My own predictions are that:

- AGI is still very far away, on a much longer time-frame than “The Singularity is Nearer” argues for. Maybe 10-30 years from now.

- Each and every AI application will reach its optimal practical point.

- Human lives will improve as they did with other technologies.

- Software engineering jobs won’t go extinct, but they will transform and we need to adapt.

- I will die, but someone born next century might not.

Vibe Coding & Designing Typing Multiplayer Game

October 11, 2025 AI, Fun 2 comments

The other day my daughter showed me the typing game her teacher encouraged kids to play. My daughter was impressed with my typing speed. This blog post is to impress her even more.

The game she played was online typing practice: you type text and compete with other players for speed and accuracy. Players are represented as racing cars. If you win races you qualify for a higher league of players. In this post I want to do a few things:

- Vibe code standalone JS file – you can try it out right in this blog post.

- Vibe design a full fledged online game.

- Vibe implement the backend for the multiplayer game.
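As a rough illustration of the "speed and accuracy" scoring such a typing game relies on, here is a minimal Python sketch. It is not the code of the game in this post; the function name is hypothetical, and it assumes the common convention that one "word" is 5 characters.

```python
def typing_stats(target: str, typed: str, seconds: float) -> dict:
    """Return words-per-minute and accuracy for one typing attempt.

    Assumption: one "word" is 5 characters, a common typing-test convention.
    """
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    # Count characters that match the target at the same position.
    correct = sum(1 for t, c in zip(target, typed) if t == c)
    accuracy = correct / len(typed) if typed else 0.0
    wpm = (correct / 5) / (seconds / 60)
    return {"wpm": wpm, "accuracy": accuracy}


stats = typing_stats("hello world", "hello wprld", seconds=6)
print(f"{stats['wpm']:.1f} WPM at {stats['accuracy']:.0%} accuracy")
```

Only correctly typed characters count towards speed here, so mashing keys quickly does not help; a real game may well weigh errors differently.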

Vibe Coding Typing Trainer

Here is the result of about 30 minutes of work. You can play it yourself (if reading from e-mail you may need to open the blog).

This was achieved with 13 prompts, summarized like this:

1. Initial project creation prompt

2. CORS issue fix request

3. Container class addition request

4. HTML demo update request

5. Simple version (typing.html) request

6. Visual version creation request

7. Error display duration adjustment

8. Error display fix attempt

9. Revert request for error display

10. UI enhancement with "click to activate" label

11. Visual version adjustment request

12. Final revert request

13. History documentation request

Vibe Designing Multiplayer Game

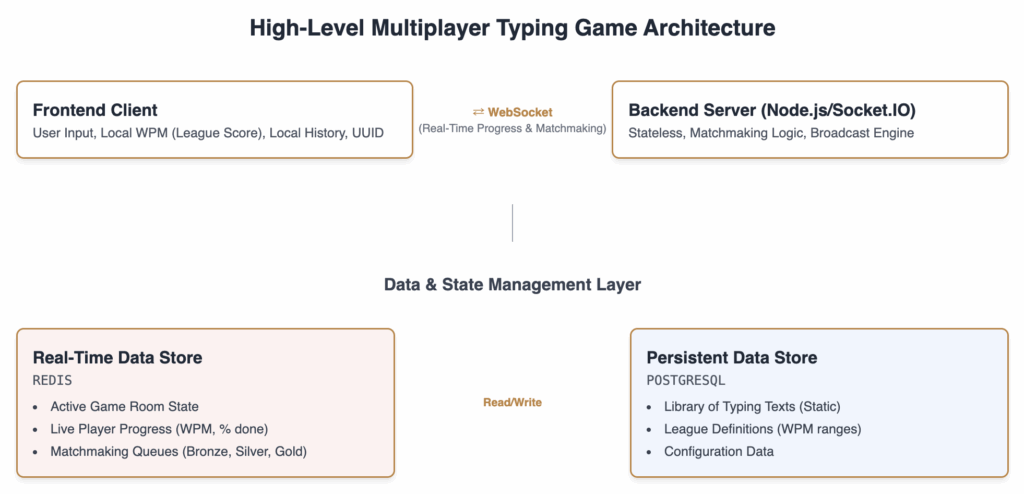

Now instead of working with Claude, I started working with Gemini to create a design for the multiplayer game.

Here is the high level system design document: opens in another page.

Given that I explicitly prompted it to be fully stateless, relying only on client side local history, no login and no other complications this seems to be a fairly good start. My prompt was:

Now I want you to create a comprehensive system design to build the game described above. We need:

- simple website with JS logic

- backend that can create rooms of gamers based on their levels

- the game should protect user privacy so there is no user info stored on backend

- game history is only stored as long as user has local cookies

- backend should handle at least 10k users

- single game has max 6 players

- if user wins the game they are placed into higher league

As a next step I fed the generated system design document back to Claude in Visual Code. This time I had to fight a lot more with the AI as it was running into issues connecting players but finally arrived at the multiplayer game:

Prompting history:

- Initial setup of multiplayer backend server

- Setup Node.js with Socket.IO and databases

- Create basic server structure

- Implementation of matchmaking system

- Create skill-based queue system

- Handle player matching logic

- Game room and state management

- Implement room creation and management

- Handle player synchronization

- Create typing texts table

- Add sample data

- Visual keyboard integration

- Add keyboard visualization to multiplayer version

- Implement key highlighting
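The skill-based queue and the 6-player room limit mentioned above can be sketched in a few lines. This is a minimal illustration in Python rather than the actual Node.js backend; the class and function names are hypothetical, and player ids are assumed to be anonymous session tokens so that no user info is stored.

```python
from collections import defaultdict, deque

MAX_PLAYERS = 6  # room size limit taken from the design prompt


class Matchmaker:
    """Groups waiting players into rooms by league (illustrative only)."""

    def __init__(self, max_players: int = MAX_PLAYERS):
        self.max_players = max_players
        self.queues = defaultdict(deque)  # league -> waiting player ids

    def join(self, player_id: str, league: int):
        """Queue a player; return a full room (list of ids) or None."""
        queue = self.queues[league]
        queue.append(player_id)
        if len(queue) >= self.max_players:
            return [queue.popleft() for _ in range(self.max_players)]
        return None

    def flush(self, league: int):
        """Start a room with whoever is waiting, e.g. after a timeout."""
        queue = self.queues[league]
        room = list(queue)
        queue.clear()
        return room


def next_league(league: int, won: bool) -> int:
    """Winners move up one league; everyone else stays where they are."""
    return league + 1 if won else league


mm = Matchmaker()
for i in range(6):
    room = mm.join(f"anon-{i}", league=1)
print(room)  # the sixth join fills the room
```

Keeping one queue per league keeps matching trivial; a real service would also need timeouts and disconnect handling, which is where most of the fighting with the AI tends to happen.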

The backend is powered by:

- Node.js

- Socket.IO (for real-time WebSocket communication)

- Redis (for server-side data/caching)

- PostgreSQL (for the database)

Conclusion

I have complete confidence that if I had 2 or 3 days to spend on this I could actually create a game that can be put out there on some servers and be actually playable by actual human beings! The new world of AI is nuts. I’m out of my time allocated to blogging, but I’m convinced over and over again that the old times of programming are over, and the only way to survive is to adapt.

P.S. Another thought: I have a friend who works on Linux kernel stuff. You would imagine that hardcore stuff like that would not be affected by the era of AI, but no, he says that AI helps to properly review pull requests to the kernel and catch real issues, moreover it does help to build more complex things. Caveat, of course, is that the proper knowledge is still required. Who knows, if I knew nothing about programming, maybe, I wouldn’t be able to build this typing game so quickly? Or, maybe, this is just a matter of time?

Vibe Coding AI that learns to play Snake game

September 14, 2025 AI, Uncategorized No comments

Today I’m Vibe Coding something and inviting you to follow along. This is not a very serious post. The purpose of this blog post is just to see how quickly we can build a NN that plays snake game and if we can improve it:

Steps we will follow:

- Generating simplest snake game you can play with WASD keys.

- Generating AI that learns to play and watch it play.

- Attempt to improve generated code so it reaches better scores.

Step 1: Generating simplest snake game you can play

Obviously, I need to write a prompt to generate such a game, but being lazy (and not knowing too much about AI) I offloaded prompt generation to GPT:

Generate a good prompt for copilot AI in Visual Code so it generates code for the snake game. Snake game can be the simplest possible terminal based game on a small field.

The output was a pretty reasonable prompt, which I could have written myself (but, hey, that takes time). The only thing I updated in the prompt was the very last line, to keep track of the score of the game:

# Write a simple snake game in Python that runs in the terminal.

# Requirements:

# - Keep the game as simple as possible.

# - Use a small fixed grid (e.g., 10x10).

# - The snake moves automatically in the last chosen direction.

# - Use WASD keys for movement (no fancy key handling needed, blocking input is fine).

# - Place food randomly; eating food makes the snake longer.

# - The game ends if the snake runs into itself or the walls.

# - Print the field after each move using simple ASCII characters:

# - "." for empty space

# - "O" for snake body

# - "X" for snake head

# - "*" for food

# - Keep the code in a single file, no external libraries beyond Python standard library.

# - Keep it short and readable.

# - Keep the score of the game. The score equals the total number of food eaten.

The generated code (with gpt-4o) was 73 lines of code and I could play the game in the terminal: https://github.com/andriybuday/snake-ia/blob/main/snake_game.py
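To give a feel for what the requirements above translate to, here is a minimal sketch of the core move logic. It is not the generated code from the repository; the names `step`, `place_food`, and `render` are hypothetical, and input handling is left out.

```python
import random

SIZE = 10  # SIZE x SIZE grid, as in the prompt's 10x10 example
MOVES = {"w": (-1, 0), "s": (1, 0), "a": (0, -1), "d": (0, 1)}


def place_food(snake, rng=random):
    """Pick a random empty cell for the food (None if the grid is full)."""
    free = [(r, c) for r in range(SIZE) for c in range(SIZE)
            if (r, c) not in snake]
    return rng.choice(free) if free else None


def step(snake, food, key, score):
    """Advance one move; snake is a list of (row, col) cells, head first.

    Returns (snake, food, score, alive). Simplification: moving onto the
    current tail cell counts as a collision.
    """
    dr, dc = MOVES[key]
    head = (snake[0][0] + dr, snake[0][1] + dc)
    hit_wall = not (0 <= head[0] < SIZE and 0 <= head[1] < SIZE)
    if hit_wall or head in snake:
        return snake, food, score, False
    snake = [head] + snake       # grow by one cell at the head
    if head == food:
        score += 1               # score equals total food eaten
        food = place_food(snake)
    else:
        snake.pop()              # no food eaten: drop the tail again
    return snake, food, score, True


def render(snake, food):
    """Draw the field with the ASCII characters from the prompt."""
    rows = []
    for r in range(SIZE):
        row = ""
        for c in range(SIZE):
            if (r, c) == snake[0]:
                row += "X"
            elif (r, c) in snake:
                row += "O"
            elif (r, c) == food:
                row += "*"
            else:
                row += "."
        rows.append(row)
    return "\n".join(rows)
```

A game loop would then read a WASD key, call `step`, print `render(snake, food)`, and stop once `alive` is false.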

Step 2: Generating AI that learns to play and watching it play

Again, prompt to get the prompt:

Now we need another prompt. This time we want to use pytorch and will be building a simple 2 hidden layers neural network with reinforcement learning. Use large punishment for loosing the game and small rewards for each eaten food. We want to achieve quick learning without too many iterations.

The prompt it generated this time was much more extensive. Here are all of the prompts: https://github.com/andriybuday/snake-ia/blob/main/README.md I then fed that prompt to both GPT-4o and Claude.
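The reward scheme requested in the prompt (a large punishment for losing, a small reward per food) boils down to a tiny function. The sketch below is an assumption about its shape, not the code from the repository, and all three constants are hypothetical values picked for illustration.

```python
FOOD_REWARD = 1.0      # small reward for each food eaten (hypothetical value)
DEATH_PENALTY = -10.0  # large punishment for losing the game (hypothetical)
STEP_PENALTY = -0.01   # tiny time penalty per move (hypothetical)


def reward(ate_food: bool, died: bool) -> float:
    """Reward for a single step of the snake."""
    if died:
        return DEATH_PENALTY
    if ate_food:
        return FOOD_REWARD
    return STEP_PENALTY


# An episode that eats twice and then crashes ends up clearly negative.
episode = [(False, False), (True, False), (True, False), (False, True)]
print(sum(reward(ate, died) for ate, died in episode))
```

The ratio between the death penalty and the food reward matters more than the absolute numbers: if dying is too cheap the agent takes reckless paths, and if it is too expensive the agent may learn to circle safely without eating.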

Claude generated a much better AI. GPT generated something that couldn’t even get a score of more than one food, while Claude was in the territory of a 10-20 score. Note that the max theoretical score on 10×10 is 99. You can see above a gif showing the last few epochs of training and game play of the Claude version.

The code for this version: https://github.com/andriybuday/snake-ia/blob/main/snake_game_ai_claude.py

Step 3: Improving AI so it reaches better scores

Ok, so what can be done to make this reach better scores? I asked GPT to recommend some improvements. It gave me general recommendations out of which I created a prompt for prompt:

Generate prompt I can give to Claude to improve performance of the Snake AI, potentially with these improvements: Change head to Dueling DQN, Add Double DQN target selection, Add PER (proportional, α=0.6, β anneal 0.4→1.0), Add 3-step returns, Add distance-delta shaping + starvation cap.

To be honest, at this point I don’t know if these improvements make sense or not, but I took the generated prompt and fed it to Claude. What I got was broken code that crashed with “IndexError: Dimension out of range”. I was hoping to run into something like this. Finally. Now, I could probably debug the problem and find where we are running out of range, but no, I’m sharing the error and stack trace with Claude again. It was able to fix it, BUT things got worse: the snake would run into infinite loops.

It turns out the generated “upgraded” version is much worse. So I decided to take a different path and go back to the simple first version and see what could be updated. The only things I did were increasing training time (# episodes), allowing for more steps during training, and slightly decreasing the time penalty. This is the change: https://github.com/andriybuday/snake-ia/commit/796ad35924700dcb73ac6aaecf8df39ec8069940
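For readers who, like me, are not sure what the terms in that prompt mean, here is a minimal plain-Python sketch of three of them: 3-step returns, Double DQN target selection, and proportional prioritized replay (PER) with β annealing. It uses the textbook formulas and is not the code Claude generated; all function names are hypothetical, and priorities are assumed to be positive.

```python
def n_step_return(rewards, gamma, bootstrap_value):
    """n discounted rewards plus the discounted value estimate after them."""
    total = sum((gamma ** k) * r for k, r in enumerate(rewards))
    return total + (gamma ** len(rewards)) * bootstrap_value


def double_dqn_target(reward, gamma, next_q_online, next_q_target, done):
    """Online net picks the next action, target net scores that action."""
    if done:
        return reward
    best = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best]


def per_probabilities(priorities, alpha=0.6):
    """Proportional PER: P(i) = p_i**alpha / sum_k p_k**alpha."""
    scaled = [p ** alpha for p in priorities]
    total = sum(scaled)
    return [s / total for s in scaled]


def beta_at(step, total_steps, start=0.4, end=1.0):
    """Linearly anneal beta from start to end over total_steps."""
    frac = min(1.0, step / total_steps)
    return start + frac * (end - start)


def importance_weights(probs, beta):
    """w_i = (N * P(i))**(-beta), normalized so the largest weight is 1."""
    n = len(probs)
    weights = [(n * p) ** (-beta) for p in probs]
    top = max(weights)
    return [w / top for w in weights]


probs = per_probabilities([1.0, 1.0, 2.0])
print(probs, importance_weights(probs, beta_at(0, 1000)))
```

With α=0 sampling becomes uniform and with α=1 it is fully proportional to priority; β controls how strongly the resulting sampling bias is corrected, which is why it is annealed towards 1.0 by the end of training.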

With the above changes the situation was much better but still not ideal.

Conclusion

Sorry for the abrupt ending, but I don’t really have time to fine-tune the generated NN or create new models to achieve the best results. The purpose here was to play and see what we can get really quickly. Another purpose of this post is to show that people, like me in this case, who just do Vibe Coding without knowing the underlying fundamentals cannot really achieve the best results really quickly. Happy Vibe Coding!

Try it yourself:

git clone https://github.com/andriybuday/snake-ia.git

cd snake-ia

python3 -m venv venv

source venv/bin/activate

pip install -r requirements.txt

python snake_game_ai_claude.py

On AI and Adaptability: AI is an asteroid and your software engineering job is a dinosaur

August 24, 2025 AI 2 comments

AI is the asteroid. Your job is the dinosaur. The question is: will your career evolve like mammals, or go extinct like T-Rex? I’m not saying that software engineering jobs will disappear, I am saying they will transform from their current form. Dinosaur jobs are things like writing boilerplate CRUD. New mammal jobs are things like designing AI-integrated systems. There is a lot in between that evolves.

Tech companies are now piloting AI powered interviews where you have to build something using AI during the interview. Are you ready for such an interview? I’m definitely not ready. Would you be able to survive this change next time you are on the lookout for the new job?

These days, with AI, being productive as a software engineer is not the same. A bit of a challenge with AI tools is that they are all new and rapidly evolving. One day writing good prompts is the skill to have, the next day it is building AI agents to do the job for you; one model is good at this, another one is good at that. The amount of things available is also quite overwhelming.

I remember at one point in my career I felt I got really good at using Visual Studio with Resharper, so good that it actually felt like a significant differentiator in my speed compared to others. Then when I had to switch to other tools/tech (frontend, java, aws, other IDEs, etc) it felt unnatural and was leveling the playing field or placing me at disadvantage compared to people who already knew how to use the other tools. At the same time, the more I had to learn new tools the easier it was to switch the next time.

Adaptability is probably one of the best skills to work on during this rapid evolution in tech. We simply cannot afford to ignore AI, that would be the biggest career mistake you could make right now.

And to make one more point very clear: I believe that software engineering requires strong fundamental knowledge that doesn’t change: understanding of how computers work and interact with each other, understanding of how software runs, algorithms. There will always be a need to figure out how to translate business needs into these fundamental concepts, it is just that the translation tooling landscape is changing and we need to get good at them.

The asteroid has already hit. Your career’s survival depends on adaptability and fundamentals. Learn fast, stay curious, and don’t bet your career on yesterday’s tools. I’m writing this as much for myself as for you. I need to step up a lot.

What is a new AI tool/concept you learned last month?

(for me it was about the architecture of AI agents, incl. MCP protocol)

Is AI Redefining Software Craftsmanship?

August 2, 2025 AI, RandomThoughts No comments

Let’s debate over the question: Is AI redefining what software craftsmanship is?

To answer this question we must first define and expand on what software craftsmanship is.

Software Craftsmanship (SC) can be defined as a mindset and approach to creating software that puts emphasis on quality, elegance, adherence to best practices, and continuous skill development.

Many ideas behind SC are shaped by books, like “Clean Code”, “The Pragmatic Programmer”, “Code Complete” and more. I’ve personally read these books in the past and have considered myself to be sort of a Software Craftsman, mainly because of taking pride in producing high quality code.

Let’s explore the “Yes” argument with an example. In my 2012 blog post “100% code coverage – real and good!” I argued that striving for absolute test coverage is not only realistic but professionally responsible saying it pays off in the long run. Recently, I worked on logic that needed new unit tests. AI generated over 100 tests for me, covering all the edge cases. It saved me tons of hours of work. I now treat these tests as a black-box safety harness. If I change the logic and introduce a bug, I expect one of them to fail. If I need to refactor heavily or modify API signatures, I simply ask AI to regenerate the tests. I no longer care if helper methods in the tests are extracted or follow perfect conventions because that’s now a solved problem. So, yes, AI is redefining what a software craftsman does.

Let’s explore the “No” argument. A colleague of mine gave this example: in the privacy space, AI can generate some “good” code, but it might not go as far as to care about whether using a raw pointer in C++ code is higher risk because of the privacy context, and if you are not a SC you would simply not pay attention to that part and let it slip, similar to how I would not care about that extracted method in unit tests. So the argument goes that AI cannot truly produce SC’s level of quality. Playing a bit of devil’s advocate, I think AI will actually get good at caring about raw pointers, extracted methods, and other things like that. Perhaps we’re not replacing craftsmanship but rather just shifting it to a higher level of abstraction.

To finish off, there was a time when people wrote in absolute binary (01110110) using absolute machine addresses, and many programmers of that time resisted using symbolic approaches (like FORTRAN). The adoption by professionals was slow, because, hey, that’s “not true programming”. To rephrase another popular statement:

Software craftsmen won’t be replaced by AI, but those who use AI will replace those who don’t.

P.S. The idea for this post originated from a random conversation over dinner with a random co-worker I’ve never met before.

AI: From Skeptic to Power User to (Re) learner

July 13, 2025 AI No comments

I, mistakenly, never gave the whole AI trend much consideration and at one point even suggested that it might be one of those overhyped technology trends that fade away with time (like AR, Bitcoin, etc.). This post is about some of my personal experiences that made me reconsider this. This post is NOT written with LLM, though, lol.

University Years

My first ever experience actually doing something AI related, was during my studies around 2007-8, in fact, I did have quite a few AI courses at the university, including building a simple NN framework and even visualizing its internal layers structure and learning process with backpropagation. I didn’t give it much thought back then. It seemed to be quite a niche technology and just part of studies. I could see it classified some data I fed it with and saw how my classmate used it to recognize numbers from car number plates.

First Jobs

After that, for quite a long time, it didn’t really show up on my radar that much. I guess I might have inadvertently used some tools that relied on ML algorithms; for example, I remember using an open source library to match images in a prototype project. At Amazon I knew some teams that were working on AI related things, such as brand protection and recommendations, but never really worked on anything AI myself. Google is known to have been at the forefront of using AI long before anyone else. Advertising at Google has been running ML models for a very long time and I had to support their efforts by working on an experimentation platform that allowed those teams to verify their hypotheses and slowly roll out new models to the world.

Rising Trend

During my years at Google, AI rose in popularity. Big tech companies started to invest extremely heavily in AI (Pichai-AI meme), oftentimes pushing for efficiency and cost cutting at one end while expanding operations at the other. I think it was also the time when I started making use of GenAI a lot more. More and more tools became available, coding became somewhat easier, summarization became easier, and so on. Since I moved to Meta in early 2025 the AI trend has continued, and it’s clear that Meta is very aggressive in hiring top AI talent (media coverage). There is more and more interaction with ML at work; some of the projects my team is driving are to integrate with ML platforms, etc.

Crossing Personal Mental Threshold of Usefulness

My main reservation with using LLMs was that I usually felt the quality of results it produced did not justify the effort I put into prompting it. Especially given I always had to correct the result. Though, I believe this changed.

Last week I had to write a few new classes in C++ that would evaluate some expressions from configs, so instead of adding files manually, I just talked to AI: “hey, create me this and that and make sure interface has this signature”, “hey, add a UT class”, “hey, update dependencies”. It actually did a fairly good job at all of that, not perfect, but good enough to save me time. This is when the realization came to me: this is now crossing that personal threshold I had in mind. It is more useful and worth a bit of effort fighting with it.

On a more personal front, I recently wanted to replan some of my life goals and learning strategies, so I made heavy use of GPT, and it’s just astonishing how good it has become at reasoning, structuring things, and actually producing what I want. I’m now a paid subscriber of GPT and am trying to use it more like a true personal assistant. I did use it before for financial advice, travel planning, summarization, etc.

Last night, I was like, how about I ask GPT to learn something together, so I asked, “let’s create an AI learning plan, here is my background: …., make it personalized”. “AI refresher” was on the first week with suggested deliverables of building a small convolutional neural network on the CIFAR-10 data set. So… drum-roll, I asked it to build a notebook with code for all of it and it produced a bunch of code, which I followed up pasting into Colab, training the model and verifying its accuracy. It is just mind boggling how in just 20 minutes or so, I can build some stuff that would have taken weeks not too long ago, plus if there were things I didn’t understand I could clarify and it gave me really good answers.

One other thing: everybody knows LLMs are good at travel planning. I usually prefer to plan everything myself and just get a starting point from GPT, but this time we wanted to go camping spontaneously, so I asked an LLM, “Lookup campgrounds within 2 hours drive from Seattle, that have plenty of first come first serve spots, access to lake, with activities including paddleboarding and biking. Create a list of 5 campgrounds with a short description.” Based on that prompt it basically did all of the Googling for me, provided pointers to sources, etc. Mind-boggling.

One other thing I asked a specialized AI tool to do was to generate a 3D model to print. It did a fairly good job. That’s it, I’m giving in.

Where I think it can still be much better

There are still a few things that I want it to be better at. For example, being less of a “yes man”. Contradicting what LLMs say makes them change their mind and say “yes, you are absolutely correct, let me update the answer”. Other things are: better reasoning, understanding context even better, etc. Arguably, it would be a very tricky challenge for LLMs to be like true humans, but it appears we are definitely heading in that direction.

Conclusion

For me personally, LLMs and AI have now clearly crossed the threshold of being not just good enough—but genuinely useful. The time and effort it takes to engage with them are now well worth the return. Whether it’s writing code faster, mapping out life goals, or planning a camping trip, the tools have become practical enough to build into daily routines.

Having finally “given in” to their usefulness, I’m also embracing AI: having fresh curiosity and investing time to study it deliberately. It feels like the right moment to not just use the technology—but to understand it, shape how I interact with it, and grow with it.